Background

The need for an ethical approach to the implementation of AI solutions has gained widespread acceptance in academic communities, the public sector and, increasingly, in commercial organisations. A considerable industry has emerged providing commentary and guidance on the topic.[1]

There has been less focus on an appropriate legal framework to regulate the implementation of AI solutions but, increasingly, this is seen as a key requirement. The Council of Europe recognises the need for legislation and will propose a strategic agenda which will include AI regulation. However, this will take time – a deadline of 2028 has been set for the process.[2]

In our initial note in this series on A Light Touch Regulatory Framework for AI[3] we argued for the need for a ‘light-touch’ regulatory framework for technology solutions using AI, with a primary focus on transparency obligations which would be dependent on the human impact of the AI solution. We suggested that the key components of the Light Touch Framework for AI should be organized around a transparency requirement relating to registrable (but not necessarily approved) AI solutions – with the assessment of the required level of transparency focusing on both technical and human importance assessments.

Our second note focused in more detail on the classification of AI solutions based on assessments of the technical quality and the human importance of the solution[4]. We proposed an AI Index (Ai2) which relates to both the technical quality and the human importance of the solution, with the assessments being carried out by the organisations developing the AI solutions. These assessments should be subject to public scrutiny pursuant to transparency requirements underpinned by statutory obligations. Transparency gives interested individuals and organisations an opportunity to review AI solutions prior to and during their deployment and operation. Except for the most invasive AI solutions, where we recognise that an approval process will be necessary, citizen and third-party review is a more modern and flexible alternative to regulatory approval processes, which can be slow and bureaucratic.

In this article we examine these transparency requirements in more detail.

Public Transparency for AI Solutions

Online public transparency registers should be established on a statutory basis for AI solutions. These registers would hold and make publicly available information on AI solutions to enable the solutions to be subject to peer and interested citizen review. Sufficient information should be made available to enable meaningful scrutiny without requiring important confidential information to be disclosed.

There are useful parallels in the disclosure requirements for patent protection. Patent applicants are required to publish details of their inventions, giving sufficient detail to enable any skilled person to operate the invention. Online databases ensure that this information is widely and easily accessible. The extent of the disclosure is sufficient to enable innovation and promote competitiveness. Patent disclosures are particularly important in the context of enforcement, but patent disclosure is also now being used pro-actively by competitors and NGOs. As an example, Greenpeace has made active use of patent application disclosures in scrutinizing biotechnology patents. We envisage that, in a similar manner, a transparency requirement for AI solutions will facilitate the scrutiny of AI solutions by a wide community of interested individuals and organisations.

There is no suitable current international legal framework for a transparency requirement for AI solutions. A legal framework needs to be developed. Again, patent protection provides useful parallels. The Patent Cooperation Treaty (PCT) assists applicants in seeking patent protection internationally for their inventions, helps patent offices with their patent granting decisions, and facilitates public access to a wealth of technical information relating to those inventions. Over time a similar Treaty could be developed for the regulation of AI solutions. This issue will be dealt with in more detail in our next instalment in this series.

It should be noted that transparency in this context does not necessarily equate to an “explainability” requirement. Explainability may require detailed information to be disclosed on the make-up of the AI solution that would be sufficient to demonstrate causality in the outputs of the AI solution on a replicable basis.[5] The transparency requirement does not need such an extensive level of disclosure. It relates to assessments of the outcomes of the operation of the AI solutions and does not necessarily need transparency of the fundamental operational details of the AI solution.

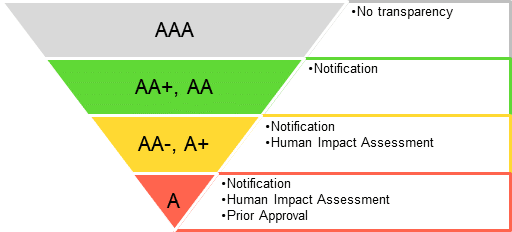

Figure 1. Degree of transparency dependent on the position in the AI Index

Transparency requirements

1. Summary

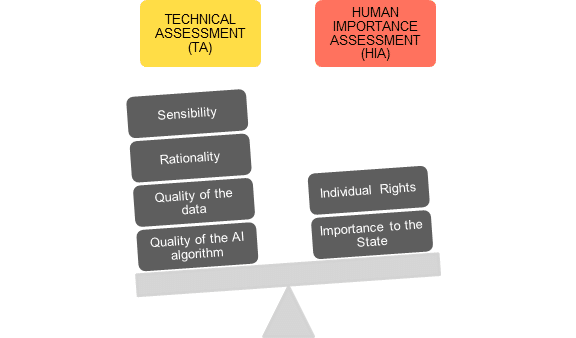

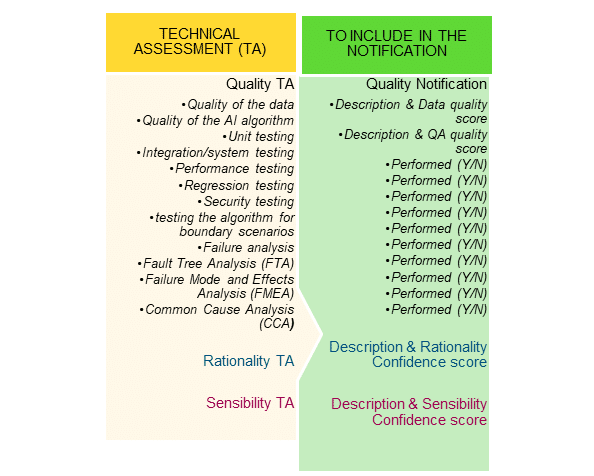

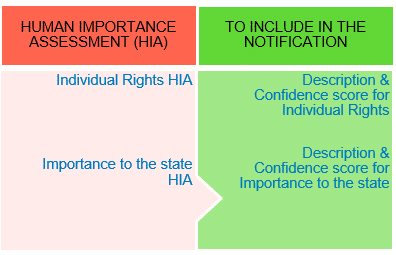

Both the technical quality (Technical Assessment – TA) and the human importance (Human Importance Assessment – HIA) of AI solutions are used to derive the positioning of an AI solution on an AI Index (Ai2). The positioning of the solution on the AI Index then governs the extent of the required disclosure. The assessments will also provide most of the information needed to be disclosed.

We contend that even though the Technical Assessment will require full access to the IP rights and trade secrets contained in the AI solution, for example the algorithms used and the source code for an AI solution, the required disclosure should not involve the disclosure of IP and trade secrets. Due to this distinction, the Technical Assessment should be done by the organization internally (through its executive and engineering teams) in order to determine the AI Index (Ai2) assessment.

Figure 1 provides a schematic of the various aspects of TA and HIA (as discussed in our previous article[6]). We use it as a base to derive the transparency requirements.

The transparency requirement operates on a “sliding scale” of transparency – from no transparency for the most basic AI solutions, through: (i) notification with the required degree of transparency; (ii) notification and human impact assessment; and (iii) notification, human impact assessment and prior approval for the most critical AI solutions (see figure 2).

Figure 2

Key:

- AAA: These AI solutions fall below the minimum threshold, with no need for the transparency requirements. Examples are online shopping, drug discovery, predictive diagnosis – heart (ML), collaboration apps, CRM, farm management.

- AA+ or AA: When the AI solution exceeds the minimum threshold, the transparency requirements include notification only, as defined in the next section. Examples of such solutions are predictive diagnosis for diabetes (DL), credit card fraud detection, smart speakers, enterprise software, ERP, insurance, line of credit calculation, dating apps, recruiting screening, cyber security and AI chips.

- AA- or A+: An AI solution that exceeds the “intermediate” threshold requires notification (as above) and the conduct of an Impact Assessment. Examples are civil justice, targeted advertising – electoral, predictive diagnosis, weather forecasting, smart grid automation, airgap network for nuclear power plants, intelligent railway routing and aircraft avionics.

- A: For the most critical AI solutions, Prior Approval is also required, in addition to notification and an Impact Assessment. We cover this in some detail below. Examples of such AI solutions are criminal justice, facial recognition for law enforcement, autonomous weapons, cyber warfare, surveillance systems and defense drones.
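The sliding scale set out in the key can be sketched as a simple lookup. The Python fragment below is illustrative only: the rating labels come from the key (with the “A” rating for prior approval taken from the Prior Approval section of this article), while the names `Obligation`, `REQUIREMENTS` and `obligations_for` are hypothetical and do not correspond to any statute or existing library.

```python
from __future__ import annotations

from enum import Enum


class Obligation(Enum):
    """Transparency obligations on the sliding scale (illustrative names)."""
    NOTIFICATION = "notification"
    IMPACT_ASSESSMENT = "impact assessment"
    PRIOR_APPROVAL = "prior approval"


# Assumed mapping from AI Index (Ai2) rating to cumulative obligations,
# read off the key: each step up the scale adds one obligation.
REQUIREMENTS: dict[str, tuple[Obligation, ...]] = {
    "AAA": (),
    "AA+": (Obligation.NOTIFICATION,),
    "AA": (Obligation.NOTIFICATION,),
    "AA-": (Obligation.NOTIFICATION, Obligation.IMPACT_ASSESSMENT),
    "A+": (Obligation.NOTIFICATION, Obligation.IMPACT_ASSESSMENT),
    "A": (
        Obligation.NOTIFICATION,
        Obligation.IMPACT_ASSESSMENT,
        Obligation.PRIOR_APPROVAL,
    ),
}


def obligations_for(rating: str) -> tuple[Obligation, ...]:
    """Return the obligations attached to a rating, or raise if it is unknown."""
    if rating not in REQUIREMENTS:
        raise ValueError(f"Unknown AI Index rating: {rating!r}")
    return REQUIREMENTS[rating]
```

A table of this kind keeps the obligations cumulative, so a solution that moves to a more critical rating never loses a requirement it already had.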

2. Notification requirement

AI solutions that exceed the minimum notification threshold will require a minimum level of disclosure. The information required for disclosure will be derivable from the Technical Assessment (TA) and the Human Importance Assessment (HIA) but without making any important IP or trade secret disclosures. The notification requirement should also include the scores and related descriptions for TA (Quality, Rationality and Sensibility) and HIA (Individual Rights and Importance to the State). Refer to Tables 1 and 2 for a summary of the TA and HIA notifications.

Table 1: Summary of TA Notifications

(a) Notifications for TA: Quality

The quality information to be disclosed will include two scores: one indicating the quality of the algorithm used and the other indicating the quality of the training data used to train an AI solution (if a data driven AI algorithm is used). Descriptions covering the background for such scores will also be required.

The description of the quality of the data should include details of the richness of the data, including its coverage of boundary scenarios and any bias, if applicable. It should also include details of the data sources used by the algorithm. Such details should not amount to any significant disclosure of IP or trade secrets, even if the data sets used are proprietary, since disclosure of the actual data sets is not required. In general, disclosing the data used for a data driven AI algorithm will not be possible due to privacy issues related to the makeup of the data itself. For example, privacy would be a significant concern if the training data includes patient healthcare data containing patients’ personally identifiable information (PII).

The description of the quality of the AI algorithm should also include at least the quality assurance processes performed and details of how the AI algorithm has been tested in a sandboxed environment, including all boundary scenarios and any identified bias.

Other aspects of the AI algorithm quality that should be disclosed include details about the AI behavior and how the AI behavior may change “in flight”. For proprietary AI algorithms such descriptions may touch on significant IP or trade secrets, and those specific items should be excluded from the disclosure.

(b) Notifications for TA: Rationality and Sensibility

The rationality assessment and the sensibility assessment can each be expressed as a confidence score to satisfy the notification requirement. Disclosure of the algorithm is not necessary as long as an acceptable confidence score accompanies the required descriptions. However, in many cases an organisation may want to disclose the AI algorithm itself, if it is not proprietary, to promote greater transparency and confidence in the AI solution.

Many technology companies base their business model on monetizing the services and products built around AI algorithms that are already open source. In these situations the commercial or societal value of the AI algorithm is marginal compared to the benefit of receiving customer and end-user confidence by disclosing the AI algorithm.

A good example of how the principles that underpin the operation of an algorithm can be identified without disclosing the actual algorithm (although not in an AI solutions context) is provided in the public consultation carried out by the schools examinations regulator Ofqual over the use of algorithms for the 2020 UK schools public exam replacement process (made necessary by the Covid-19 pandemic).[7] Although the formal process of the consultation failed to identify the problems that would arise in practice as a result of the use of the algorithm to predict student exam grades, the consultation process indicates that the principles underpinning algorithms can be described in a publicly accessible manner. (The limitations on the effectiveness of public disclosure indicated by this Ofqual consultation will be considered in more detail in a later article in this series).

The notifications for rationality and sensibility should include information relating to the objectives of the AI solution, including information answering the questions:

- What problem is the AI aiming to solve in the product?

- What is the AI algorithm calculating to solve the problem?

- Does AI decision making and/or operation form a major part of the product operation?

In all circumstances the potential compromise between transparency and confidentiality will need to be considered. In short, the description of the rationality and sensibility assessments may in certain cases involve IP and trade secret disclosures.

Table 2: Summary of HIA Notifications

(c) Notifications for HIA: Individual Rights

AI solutions that will have a significant impact on individuals should have a greater level of scrutiny than those which solely facilitate business processes. There will inevitably be a great deal of subjectivity around the concept of what may constitute a “significant impact on individuals”. What is tolerated by many people may be regarded as deeply intrusive by others. Individuals also tend to accept intrusions into their liberties over time and so may not even realise that they are being impacted. This problem is particularly significant in the context of algorithmic bias arising from training data bias. Training data bias often simply reflects the state of society, such as gender and race bias.

A basic framework can be established building on the UN Universal Declaration of Human Rights. The framework should review the extent to which there is a risk that the operation of an AI solution could result in any of the basic human rights identified in the Declaration being jeopardised. If there is a risk that any of these basic human rights could be jeopardised, the AI solution should be marked with an enhanced risk profile.

As an example, Article 7 of the Declaration provides that:

All are equal before the law and are entitled without any discrimination to equal protection of the law. All are entitled to equal protection against any discrimination in violation of this Declaration and against any incitement to such discrimination.

For example, in the light of Article 7, AI solutions relating to criminal justice need to be assessed in order to determine if they could result in people of specific groups or categories (e.g. race) being “targeted” by the public or other law enforcement bodies. If so, the AI solutions should have an enhanced rating on the AI Index.

(d) Notifications for HIA: Importance to the State

The concept of importance to the state varies from country to country in accordance with each state’s political and societal nature. This clearly depends on the nature of the state’s regime and this article is not the place to agree or disagree with political regimes across the world.

As an example, a reasonably uncontroversial topic of importance to the state is the maintenance of public order. It is in the interests of most states, most of the time, to maintain public order. AI solutions can be assessed against this criterion, and an AI solution that poses public order risks would clearly be given a higher HIA rating.

There would not necessarily need to be a direct connection with public order for the risk assessment to be made. A direct public order connection would relate to AI solutions which could incite elements of the population to engage in violent or disruptive activities. (Of course, there is a “fine line” between these types of solutions and solutions which may identify current injustice and encourage citizens to engage in peaceful protests). An example of an indirect public order connection could relate to an AI solution which aims, beneficially, to economise on the need for resilience back-up in national energy supplies. If such a solution were to be implemented and did not operate effectively, it could have a public order impact by severely disrupting energy supplies to areas of the population.

In the United Kingdom there has already been a legal case relating to the use of AI solutions and the potential conflict between the importance to the state and individual rights. This case (Bridges v South Wales Police) related to the lawfulness of the use of facial recognition technology to screen against “watchlists” of wanted persons in police databases at football matches at Cardiff football ground.[8] On appeal the Court of Appeal held that the use of facial recognition technology by South Wales Police was unlawful and did not comply with Article 8 of the European Convention on Human Rights (the right to respect for private and family life). The Court did not rule out facial recognition technology as such but held that both the Data Protection Impact Assessment and the Equality Impact Assessment carried out by South Wales Police were inadequate and, in particular, that the Equality Impact Assessment carried out was “obviously inadequate” and failed to recognise the risk of indirect discrimination.
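The items to be notified under the four notification headings above can be gathered into a single register entry. The following Python sketch shows one possible shape for such an entry. It is an assumption for illustration only: the class and field names are hypothetical, and the numeric scale for scores is not defined in this series, so any value used here is a placeholder.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ScoredItem:
    """A score plus the description giving the background for that score."""
    score: float      # scale is an assumption; the article does not define one
    description: str  # should exclude significant IP and trade secrets


@dataclass(frozen=True)
class Notification:
    """Hypothetical public register entry for one AI solution."""
    solution_name: str
    ai_index: str  # e.g. "AA", taken from the organisation's own assessment
    # Technical Assessment (TA)
    algorithm_quality: ScoredItem
    data_quality: ScoredItem
    rationality: ScoredItem
    sensibility: ScoredItem
    # Human Importance Assessment (HIA)
    individual_rights: ScoredItem
    importance_to_state: ScoredItem

    def public_record(self) -> dict:
        """Return the entry as plain nested dictionaries, ready for publication."""
        return asdict(self)
```

Keeping each score together with its description reflects the requirement that scores are disclosed alongside the background that explains them.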

3. Impact Assessment

AI solutions that exceed an “intermediate” notification threshold should also be subject to an additional impact assessment transparency requirement. This will involve TA and HIA notification (as above) and the conduct of an Impact Assessment.

The Canadian Government has developed an algorithmic impact assessment tool which gives a helpful indication of the issues that need to be assessed. The Canadian impact assessment is comprised of 4 major sections:

- Business case. What are the key business objectives of the tool? Are you trying to speed things up? Are you trying to clear a backlog of activities? Are you trying to modernize your organization?

- System overview. What’s the main technological foundation? Image recognition, text analysis, or process and workflow automation?

- Decision oversight. Is it related to health, economic interests, social assistance, access and mobility, or permits and licenses?

- Data source(s). Is it from multiple sources? Does it rely on personal (potentially identifiable) information? What’s the security classification, and who controls it?

The impact assessment is required only where the AI Index (Ai2) rating is AA- or more critical (that is, AA-, A+ or A). Examples are civil justice, targeted advertising – electoral, predictive diagnosis, weather forecasting, smart grid automation, airgap network for nuclear power plants, intelligent railway routing and aircraft avionics.

The key purpose of the impact assessment is to provide a framework for AI practitioners to be able to assess the human and societal impact of the AI solutions that they develop in a more systematic manner. By using the impact assessment AI solution developers will have a more formalised understanding of these human and societal impact issues that would not necessarily otherwise have been identified as part of a technical and commercial development process.

As a matter of good corporate governance organisations will want to publicise the outcome of these impact assessment exercises. This will provide business and public confidence in their AI solutions. We would expect that over time, journalists and engaged citizens will take an increasing interest in the conduct of these impact assessments and so they will have a powerful impact on the development of responsible AI solutions.

4. Prior Approval

The most critical and sensitive AI solutions with a high human importance assessment, which are allocated an “A” assessment on the AI Index, should be subject to a regulated prior approval mechanism similar to the prior approval of medicinal drugs. These include AI solutions relating to criminal justice, facial recognition for law enforcement, autonomous weapons, cyber warfare, surveillance systems and defense drones.

In the medicinal drugs context the approval of a drug is made – in general – where data on the drug’s effects have been reviewed by the relevant regulatory body and the drug is determined to provide benefits that outweigh its known and potential risks for the intended population.

The drug approval process takes place within a structured framework that includes:

- Analysis of the target condition and available treatments – reviewers analyze the condition or illness for which the drug is intended and evaluate the current treatment landscape.

- Assessment of benefits and risks from clinical data – reviewers evaluate clinical benefit and risk information submitted by the drug maker, taking into account any uncertainties that may result from imperfect or incomplete data. Evidence that the drug will benefit the target population should outweigh any risks and uncertainties.

- Strategies for managing risks – all drugs have risks. Reviewers assess the proposed risk management strategies, including how the risks can be detected and managed.

In the AI context it has been suggested that there should be “an agency (not necessarily a new one) independent of government which has statutory authority to intervene to apply appropriate sanctions in the event of misuse of algorithms”.[9] We suggest that this approach should apply to the most critical and sensitive AI solutions with an “A” assessment on the AI Index.

A three-stage approval process should be applied:

- Sensitivity Assessment – a standardised assessment of the intended use and of the severity of the consequences for the subject of the system when recommendations or decisions go wrong.

- Risk Assessment & Approval – a detailed review of the risks and benefits of the proposed AI solution, together with evidence to support the usage of the AI solution (as is provided by clinical trial data in medicinal drug approval processes), including:

  - input data accuracy;

  - transparency on the use of algorithms, decision transparency and other AI tools used in the make-up of the AI solution; and

  - processes surrounding the intended use of the AI solution algorithm, including the processes for communications with users and the complaints and remediation process.

  Following the risk assessment, the AI solution would be given an approval as long as it meets the required standards. In order to be effective and not hinder or stifle innovation, the risk assessment processes would need to be carried out by personnel with a high degree of understanding and competence in the field of AI, and with sufficient resources to be able to carry out the risk assessments without introducing inordinate delays into the implementation life-cycle.

- On-going monitoring and assessment – as with the medicinal drug approvals process, there will be a need and an opportunity for the regulator to intervene when things go wrong.
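The three stages above can be pictured as a simple progression. The Python sketch below is illustrative only. The stage names follow the list, but the `advance` function and its rule that a stage is repeated until it is passed are our assumptions, since the handling of a failed stage is a matter for the regulator.

```python
from enum import Enum


class Stage(Enum):
    """Stages of the proposed prior approval process, in order."""
    SENSITIVITY_ASSESSMENT = 1
    RISK_ASSESSMENT_AND_APPROVAL = 2
    ONGOING_MONITORING = 3


def advance(stage: Stage, passed: bool) -> Stage:
    """Return the stage an AI solution is in after the current stage is reviewed.

    Assumptions: a stage that is not passed is repeated, and on-going
    monitoring is open-ended, so a solution never advances beyond it.
    """
    if not passed or stage is Stage.ONGOING_MONITORING:
        return stage
    return Stage(stage.value + 1)
```

Under these assumptions an approved solution stays in the monitoring stage indefinitely, which matches the idea that the regulator retains the ability to intervene after approval.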

Summing up

We argue that public transparency within a regulatory framework will enable the meaningful scrutiny of AI solutions, which will both provide public protection within a legal structure and enable innovation to flourish. Transparency will encourage AI developers to produce higher quality solutions. Public scrutiny will expose poor quality AI solutions and those that could have harmful consequences. Transparency can be achieved without requiring important confidential information to be disclosed.

There are parallels between this approach to public transparency for AI solutions and the “community” aspects of open source software which includes companies, the public sector and private individuals. Organizations have been established to provide management and supervision of open source solutions, such as the Linux Foundation. We envisage that a parallel “community” involvement will develop in the review and scrutiny of AI solutions.

In the next article in this series we will explore in more detail the extent to which transparency will provide opportunities for meaningful scrutiny of AI solutions.

Roger Bickerstaff and Aditya Mohan

About the authors:

Roger Bickerstaff – is a partner at Bird & Bird LLP in London and San Francisco and Honorary Professor in Law at Nottingham University. Bird & Bird LLP is an international law firm specializing in Tech and digital transformation.

Aditya Mohan – is a founder at Skive it, Inc. in London and San Francisco. He has research experience from Intel Research, IBM Research Zurich, MIT Media Labs and HP Labs. He studied at Brown University and IIT. San Francisco’s Skive it, Inc. with additional registered office in the United Kingdom is a Deep Learning company building autonomous machines that can feel.

[1] See https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3518482

[2] See https://www.coe.int/en/web/artificial-intelligence/director-jan-kleijssen

[3] See https://digitalbusiness.law/2019/11/a-light-touch-regulatory-framework-for-ai/

[4] See https://digitalbusiness.law/2020/09/a-light-touch-regulatory-framework-for-ai-part-2-classification-of-ai-solutions/

[5] See https://royalsociety.org/-/media/policy/projects/explainable-ai/AI-and-interpretability-policy-briefing.pdf

[6] See https://digitalbusiness.law/2020/09/a-light-touch-regulatory-framework-for-ai-part-2-classification-of-ai-solutions/

[7] See https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/879627/Exceptional_arrangements_for_exam_grading_and_assessment_in_2020.pdf

[8] See https://www.judiciary.uk/wp-content/uploads/2020/08/R-Bridges-v-CC-South-Wales-ors-Judgment.pdf

[9] See https://www.techdotpeople.com/post/should-algorithms-be-regulated-like-pharmaceuticals